Retraction (15 April 2022): Greg Egan has kindly explained on Twitter that I was misinterpreting the narrator’s statements. Specifically, the “from within” part means that morality is in part a result of human internal mental processes, but that those processes of course condition on the external world. I am happy to stand corrected!

Post prior to retraction:

Greg Egan’s short story “Silver Fire” is about people retreating from secular values. It’s the near future, and organized religion is fading away, but “the saccharine poison of spirituality” is taking its place. The main character is a medical researcher, and most of the plot deals with spirituality in conflict with reliable science. In the background, the researcher worries about her daughter, who thinks science is boring and much prefers alchemy.

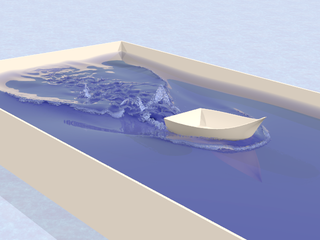

TensorFlow

TensorFlow

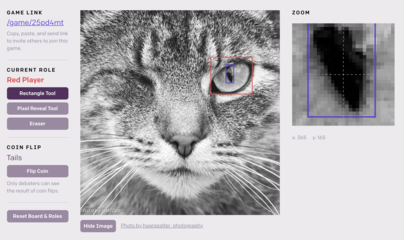

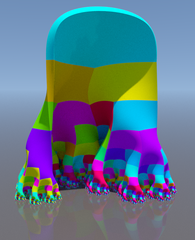

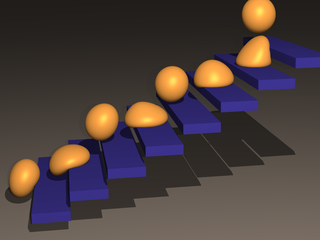

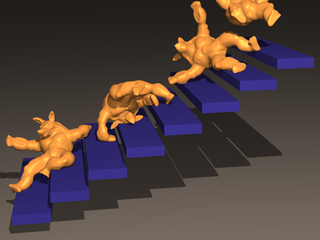

Eddy

Eddy

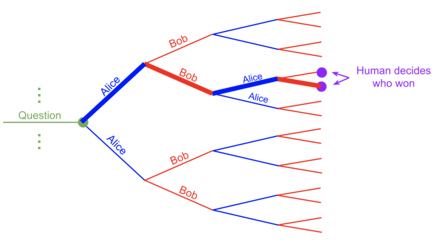

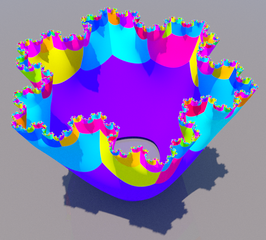

Geode

Geode